Upgrading a SharePoint farm can reveal hidden issues that cause no visible problems in your current environment. That was especially true on a recent customer visit to assist with an upgrade from SharePoint 2007 to SharePoint 2010. During the upgrade we encountered the error “Failed to create field: Field type <field name> is not installed properly” while attempting to upgrade hundreds of SharePoint lists and document libraries. The issue my customer faced related to a custom field from a single third-party company, whose name I have removed for confidentiality. Below is a recap of what we encountered and how we worked around the issue. I am also making available the PowerShell script I wrote to find all instances of a field name in a web application.

Problem

The field that was causing the issue was a hidden column (SPField.Hidden = true, documented on MSDN) that was installed by a third-party solution the customer no longer wanted to use. The customer had uninstalled the third-party WSP file, but the custom field still existed on hundreds of lists and document libraries. Unfortunately, because the column was hidden, it didn’t show up on the list settings page.

When the customer tried to upgrade the environment they had an entry in the ULS logs with the below information. The message is the key piece of information.

| Field | Value |
| --- | --- |
| Area | SharePoint Foundation |
| Category | Fields |
| Event ID | 8l1l |
| Level | High |
| Message | Failed to create field: Field type <field name removed> is not installed properly. Go to the list settings page to delete this field. |
| Correlation ID | <correlation ID> |

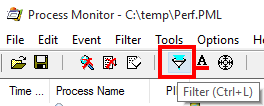

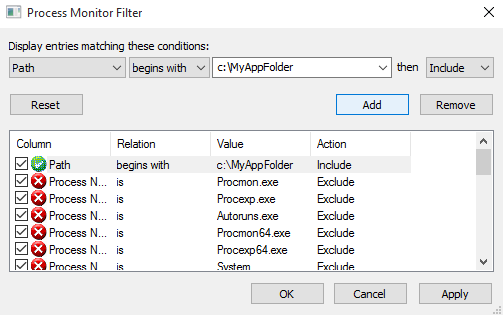

As the error message stated we could go to the list settings page to delete the field. Unfortunately the error message (and the next ULS entry with the stack trace) didn’t specify which lists contained the problematic field. I thought it best to use PowerShell to traverse the thousands of lists and libraries in the environment to find which ones contained a field matching the problem field name.

In order to do this I repurposed a script I had written to display all site collection administrators in a web application. I thought finding instances of the field name would be as easy as calling SPList.Fields.ContainsField("field name") but I was wrong. The problem was that the SPFieldCollection enumerator was not able to successfully enumerate the fields on any list that contained the problematic field. I received an error similar to the one I saw in the ULS logs about the problematic field not being installed properly.

Receiving an error was not entirely useless though. I knew that receiving an exception while enumerating the list fields would point me to the lists that I needed to correct. I decided to use the Try / Catch construct to capture the exception and add that list (and its parent SPWeb) to an array of results. Below is a slightly modified version of the script that I ended up using. You can download the script below as well.

```powershell
###############################################################
#SP_Display-InstancesOfErroringFieldNameInWebApp4.ps1
#
#Author: Brian T. Jackett
#Last Modified Date: Dec. 7, 2011
#
#Traverse the entire web app site by site to display
# instances of a field name on lists. Expectation is that
# enumeration of list will produce an error that can be
# captured. Does not work against external lists at this time.
###############################################################

#load the SharePoint cmdlets if not already running in the SharePoint Management Shell
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

$url = Read-Host -Prompt "Enter a web application URL"
$result = @()

Start-SPAssignment -Global

$webApp = Get-SPWebApplication $url

foreach($site in $webApp.Sites)
{
    Write-Debug "Site: $($site.Url)"

    foreach($web in $site.AllWebs)
    {
        Write-Debug " Web: $($web.Name)"

        foreach($list in $web.Lists)
        {
            try
            {
                #if an error occurs when enumerating through the list fields
                # then the catch block records the list as a result
                $list.Fields | Out-Null
            }
            catch
            {
                Write-Debug " List Error: $($list.Title)"

                #disable allowing multiple content types on list (used later in blog post)
                $list.ContentTypesEnabled = $false
                $list.Update()

                #store the web URL and list title with the erroring field
                $result += @{$($web.Url)=$($list.Title)}
            }
        }
    }
}

Write-Output "****Results****"
$result

Stop-SPAssignment -Global
```

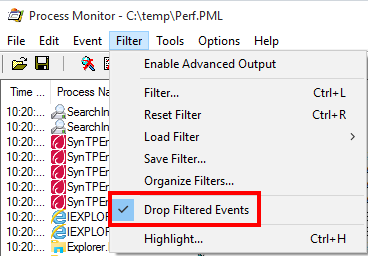
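Because `$result` is an array of single-entry hashtables, it can be awkward to sort or hand off to whoever is cleaning up lists by hand. The following is a minimal sketch of one way to flatten it into rows; the function name `ConvertTo-AffectedListRow`, the property names `WebUrl` and `ListTitle`, and the sample URLs are my own placeholders and are not part of the original script or the customer's results.

```powershell
# Hypothetical helper (not part of the original script): flattens the array of
# single-entry hashtables in $result into objects with two named properties.
function ConvertTo-AffectedListRow
{
    param([object[]]$Result)

    foreach($entry in $Result)
    {
        # Skip anything that is not a hashtable (for example a $null entry).
        if($entry -is [hashtable])
        {
            foreach($webUrl in $entry.Keys)
            {
                New-Object PSObject -Property @{
                    WebUrl    = $webUrl
                    ListTitle = $entry[$webUrl]
                }
            }
        }
    }
}

# Placeholder data in the same shape the script builds; these are not real results.
$sampleResult = @(
    @{ "http://intranet/sites/hr" = "Shared Documents" },
    @{ "http://intranet/sites/it" = "Tasks" }
)

$rows = @(ConvertTo-AffectedListRow -Result $sampleResult | Select-Object WebUrl, ListTitle)
$rows | Sort-Object WebUrl, ListTitle | Format-Table -AutoSize
```

In the audit script you would pass `$result` instead of `$sampleResult`, and the rows could be piped to `Export-Csv -NoTypeInformation` if a file is easier to share.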

The output of the script showed that the customer had hundreds of lists and libraries that contained the problematic field. Since this was affecting hundreds of lists I thought it would be more efficient to use PowerShell to loop through all lists that were experiencing this issue and remove the field using the server Object Model API for SPField.Delete(). Unfortunately when I used that API method I received an error message stating that it is not possible to remove a SharePoint field that is hidden. Frustration ensued.

Solution

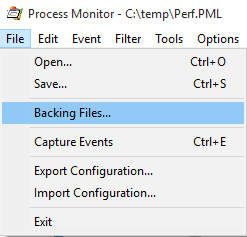

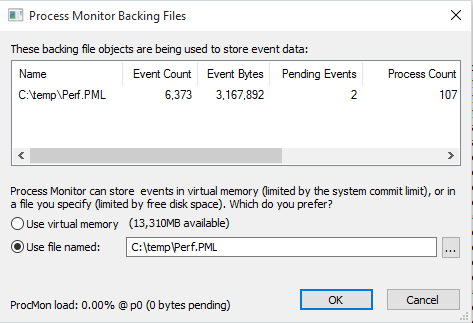

Since programmatic deletion of the field was not possible I resorted to following the error message instructions and removing the field by hand on the list settings page. I was able to delete the field from about 20% of the lists using this method (see following screenshot.)

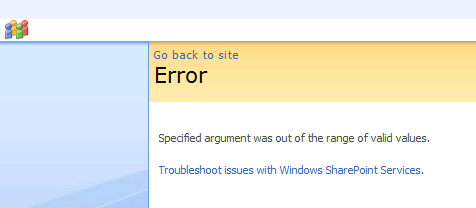

For the remaining 80% of the problematic lists I received an error page stating that the list settings page was not viewable because the problem field was not installed properly. More frustration ensued.

At this point I looked for a pattern between lists that I could view the settings pages and those that I could not view. One pattern did emerge. Lists that I could view did not allow multiple content types. Lists that I could not view had the setting for “Allow management of content types” set to Yes (see following screenshot.)

Now because I was not able to view the list settings page I couldn’t turn off management of content types through the UI. I was able to modify the setting using PowerShell and the server Object Model API for SPList.ContentTypesEnabled though. I updated my above script to include the below lines.

```powershell
$list.ContentTypesEnabled = $false
$list.Update()
```

After the lists were updated to disallow multiple content types I was then able to view the list settings page through the UI. This allowed us to clean up an additional 75% of the lists. Sadly there were a few dozen lists for which we still could not view the list settings page. For those isolated list instances it was decided to either migrate content from the existing 2007 farm by hand (download and re-upload) or have users delete and recreate the content.
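Since turning off content type management changes how a list behaves, it may be worth recording which lists actually had the setting changed so the change can be reviewed afterwards. Below is a minimal sketch of that idea, not what I ran at the customer: the function name `Disable-ListContentTypeManagement` and the `WasEnabled` property are hypothetical, and it assumes that `ContentTypesEnabled` can still be read on an affected list.

```powershell
# Hypothetical helper (not from the original script). $List is expected to be an
# SPList, but any object exposing Title, ContentTypesEnabled and Update() works.
function Disable-ListContentTypeManagement
{
    param($List)

    # Remember the original setting before touching it.
    $wasEnabled = [bool]$List.ContentTypesEnabled

    if($wasEnabled)
    {
        $List.ContentTypesEnabled = $false
        $List.Update()
    }

    # Emit a record so the change can be reviewed later.
    New-Object PSObject -Property @{
        ListTitle  = $List.Title
        WasEnabled = $wasEnabled
    }
}

# Possible use inside the catch block of the audit script (assumes an array
# named $changed was created beforehand with $changed = @()):
#   $changed += Disable-ListContentTypeManagement -List $list
```

Lists that already had the setting turned off are left untouched, which also avoids an unnecessary `Update()` call on them.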

Conclusion

In order to fix the lists that contained the problematic field we had to go through the UI to manually remove the field. To get a listing of the affected lists I used the PowerShell script above to find all lists that could not enumerate through their fields. Additionally, most lists required that I disable multiple content types on the list. This may be an unacceptable option in your environment, but it was an acceptable loss in this one. Beyond that, there were still lists that could not be saved in their current form.

Whenever you install or use any third-party solutions, know that there can be risks associated with them. Despite my customer having uninstalled the solution and tested the migration previously, this issue still occurred. With all of the support staff involved we ended up losing days' worth of time due to this problematic field. Hopefully if you run into this same scenario you can use this audit script and process to troubleshoot your issue more quickly.

-Frog Out