Now this is a topic that really excites me. It combines two things I love: automation and SharePoint. At my current client we are in the process of moving our custom SharePoint applications to the production environment. Moving to production means that we developers have less control over the implementation, be it databases, code migration, etc. To ease the load on the infrastructure team that is implementing our custom applications, I took the liberty of automating as much of the process as possible. The goal I set for myself was to take a base SharePoint farm (bits installed and Central Administration site running) all the way to a fully functioning production farm in less than 1 hour. Here are a few numbers to give you an idea of the scope of this endeavor.

- 5 distinct custom applications

- 1 web application with an additional extension for forms-based authentication

- 15+ custom and 3rd party WSPs

- 1 root site collection

- 4 subsites (all with unique security settings)

Additionally, we have some custom databases, stored procedures, and other database-related pieces that are also deployed, but since that is technically outside of the standard SharePoint realm I won’t be touching on it. Ok, now that you have an idea of the scope of this implementation, let’s get down to what all this entails, why you would consider automating your deployment, and some of the lessons learned from my experience.

First, what can you use to automate your deployment? There are a number of tools available, each with its own pros and cons. Here are a few options with a brief analysis.

- STSADM.exe – this is an out-of-the-box command-line tool for performing administrative tasks. STSADM can perform a number of operations that aren’t available through the SharePoint UI, and because it is a command-line tool, its commands can be put into batch scripts so that multiple commands run consistently. I typically script commands into either a .bat file or a PowerShell script (our next focus).

- PowerShell – I’ll say this now and I’ll say it again as many times as needed: if you are a Windows/SharePoint admin/developer/power user and haven’t begun to learn PowerShell, make this a high priority. In a previous post I talked about how important PowerShell is going to be going forward with any Microsoft technology. Just look at the fact that it is built into Windows 7 and Server 2008 R2 (i.e., it can’t be uninstalled). That aside, PowerShell lets you run STSADM commands, SharePoint API calls, or even SharePoint web service calls. In v2 you’ll be able to run remote commands, debug scripts, and use a host of other new features.

- Team Foundation Server – I have not personally had much of a chance to look into this approach aside from reading a few articles. Essentially, you can have automated builds run from your Team Foundation Server and track and analyze deployments in one integrated environment. For very large or well-disciplined organizations this seems like a good next step.

- 3rd party products – these could range anywhere from a workflow product able to run command-line calls to a task scheduler product able to schedule batch scripts. Again, these would most likely rely on STSADM or one of the SharePoint APIs. The ability to schedule scripts or have them fire according to workflow logic may be useful in your organization, depending on its size and needs.
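
To make the STSADM batch-script idea concrete, here is a minimal Python sketch that generates a .bat file which adds and then deploys a list of WSPs in a fixed order. This is not my actual deployment script: the solution paths, the web application URL, and the SharePoint 2007 ("12" hive) location of stsadm.exe are assumptions. It uses the `addsolution`, `deploysolution`, and `execadmsvcjobs` operations, and follows each `-immediate` deployment with `execadmsvcjobs`, which is commonly used to have a script wait for pending deployment jobs before moving on.

```python
# Sketch: generate an STSADM batch file that adds and deploys WSP solutions
# in a fixed, repeatable order. Solution paths and the URL are hypothetical.
from pathlib import PureWindowsPath

# Assumes SharePoint 2007 (the "12" hive); adjust the path for other versions.
STSADM = r'"%CommonProgramFiles%\Microsoft Shared\web server extensions\12\BIN\stsadm.exe"'


def build_deploy_script(solutions, web_app_url):
    """solutions: list of (wsp_path, web_app_scoped) tuples, in deployment order.

    web_app_scoped should be True only for solutions that contain Web
    application-scoped resources; global solutions are deployed without -url.
    """
    lines = ["@echo off", "REM Step 1: add every solution to the farm solution store"]
    for wsp_path, _ in solutions:
        lines.append(f'{STSADM} -o addsolution -filename "{wsp_path}"')

    lines.append("REM Step 2: deploy each solution, then wait for pending jobs")
    for wsp_path, web_app_scoped in solutions:
        # The solution name registered by addsolution is the WSP file name.
        name = PureWindowsPath(wsp_path).name
        target = f" -url {web_app_url}" if web_app_scoped else ""
        lines.append(
            f'{STSADM} -o deploysolution -name "{name}"{target} -immediate -allowgacdeployment'
        )
        lines.append(f"{STSADM} -o execadmsvcjobs")
    # Batch files conventionally use CRLF line endings.
    return "\r\n".join(lines) + "\r\n"


if __name__ == "__main__":
    print(build_deploy_script(
        [(r"C:\Deploy\Contoso.Branding.wsp", True), (r"C:\Deploy\Contoso.Common.wsp", False)],
        "http://portal.contoso.local",
    ))
```

Keeping the solution list in data rather than in hand-typed commands is what makes the run repeatable: between environments, only the URL has to change.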

Second, why automate deployments? Won’t you spend as much time developing the deployment scripts as you would manually clicking the buttons or typing the commands? This is a conversation best had between the team doing the development and the team doing the deployment. If both happen to be the same team, or your organization is very small, perhaps the benefit of automation won’t be worthwhile (in the short term). For larger organizations (and in the long term) I would always recommend automated deployments. The reasons include a repeatable process, fewer “fat-fingered” commands, less deployment time per environment, and less need for developers to be on hand during implementation, among others. On the flip side, there is the added time to develop the scripts and the added difficulty of debugging them. Weighing these, your organization should decide what works best for you.

Third, what’s the catch? No one gets a free lunch (unless you somehow do get free lunches regularly, in which case give me a call and share your secret), so there must be some areas where manual deployment has the advantage over automation. Due to the very complex nature of our current deployment, there are a few items for which automation actually produces unintended results. Here are a few to note.

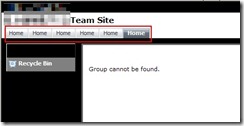

- Deploying a site definition as a subsite does not inherit the top link bar properly. See the pictures above. Essentially, the top link bar fills with links to the subsite home page (first picture) instead of using the parent site’s top link bar (second picture). As a result we are forced to create our subsites manually. Luckily, custom site definitions handle the heavy lifting of this process.

- In a farm that has multiple web front ends, activating features that make multiple updates to the web.config (read this article for excellent background) can cause issues. The requests to update the web.config on each web front end are sent out, but only one request can be fulfilled at a time. Since we have 5 or more features that fall into this category, the requests back up and do not complete. We have not found a good mechanism for delaying each request until the previous one has completed. With more practice we may find a way to fine-tune this so that it is no longer an issue.

- When deploying sites/subsites from a site definition, any web part connections (one web part sending data to another web part) must be configured manually. From what I have seen so far, web part connections cannot be contained in the onet.xml site definition (I assume this is an instantiation vs. declaration issue), but I have a hunch that you could create a simple feature whose sole purpose is to contact the web part manager and create the web part connections. I have not had time to test this out, but it sounds plausible. If anyone has thoughts or suggestions on this topic, please leave feedback below.

- Also, when deploying sites/subsites from a site definition, some features cannot be activated properly at site creation time. A good example is a custom feature that creates additional SharePoint groups on your site. This is a tough one to describe, but visualize a chicken and an egg (yes, chosen for comedic effect). The chicken is your new site and the egg is a SharePoint group created by a feature activated on (born from) your site (your chicken). When you create your new site (chicken), you are also calling for features to be activated (group created/egg born). Since the egg comes from the chicken, it can’t possibly be laid while the chicken itself is still being born. This leads to an error, and the egg is never born. Instead you must activate the feature that creates this group manually (or in a later script step). I hope this one didn’t thoroughly confuse you. Just know that some features might not be able to be activated at site creation time.
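
A sketch of the “later script step” approach, written as a small Python generator of STSADM commands. It assumes the subsites already exist and that stsadm.exe is on the PATH; the feature folder names and URLs are hypothetical. Group-creating features are activated only after their sites exist, and web.config-modifying features are activated one at a time with `execadmsvcjobs` after each. Whether that pause actually prevents the web.config backlog is an untested assumption, not a confirmed fix.

```python
# Sketch: STSADM steps to run after the subsites already exist.
# Feature folder names and URLs are hypothetical placeholders.


def build_post_create_steps(site_features, web_config_features, web_app_url):
    """Return STSADM command lines, in execution order.

    site_features: {site_url: [feature_name, ...]} for features (e.g. group
        creation) that fail when activated during site creation.
    web_config_features: features whose receivers modify web.config; they are
        activated one at a time against the web application.
    """
    steps = []
    # Activate site-level features only now that each site fully exists.
    for site_url, features in site_features.items():
        for feature in features:
            steps.append(f"stsadm -o activatefeature -name {feature} -url {site_url}")

    # Activate web.config features one by one. Following each with
    # execadmsvcjobs is an untested attempt to let pending jobs finish
    # before the next modification request is queued.
    for feature in web_config_features:
        steps.append(f"stsadm -o activatefeature -name {feature} -url {web_app_url}")
        steps.append("stsadm -o execadmsvcjobs")
    return steps


if __name__ == "__main__":
    for line in build_post_create_steps(
        {"http://portal/hr": ["ContosoHRGroups"], "http://portal/it": ["ContosoITGroups"]},
        ["ContosoFbaWebConfig", "ContosoAjaxWebConfig"],
        "http://portal",
    ):
        print(line)
```

Splitting these activations out of the site definition keeps site creation itself error-free, and the ordering lives in one place if the web.config features later need a different sequence.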

If you were paying attention at the beginning, you may be asking yourself, “Great overview on automating your install, Brian, but how close did you get to your goal of a 1-hour deployment?” We don’t have accurate measurements of the previous deployment time, as our development environments grew organically at first and were added to slowly. I would estimate that rebuilding from scratch by hand would take at least 3 hours and constant babysitting by the implementer. With the new process we are able to deploy the entire base farm described above in roughly 30-45 minutes. On top of that, about 70% of that time is spent just waiting for the WSP files to be deployed to the farm. As an added bonus, the number of clicks and lines typed is drastically reduced (I would roughly estimate by 70% and 90% respectively).
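
Taking those estimates at face value (and using 3 hours as the lower bound for a manual rebuild), the waiting time and the speedup work out roughly as follows:

$$
0.7 \times 30\ \text{min} = 21\ \text{min}, \qquad 0.7 \times 45\ \text{min} = 31.5\ \text{min}
$$

$$
\frac{180\ \text{min}}{45\ \text{min}} = 4, \qquad \frac{180\ \text{min}}{30\ \text{min}} = 6
$$

In other words, roughly 21 to 32 minutes of each run is spent waiting on WSP deployment, and the automated build is at least 4 to 6 times faster than the estimated manual rebuild.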

So there you have it, a quick intro/lessons learned for automating a SharePoint deployment. For a much more example-based and in-depth article, please read Automate Web App Deployment with SharePoint API. I have referenced it many times when doing deployments, and it covers just about everything from deploying lists to updating web.config to installing SharePoint. If you have any questions, comments, or suggestions, please feel free to leave them. If there are any requests, I can give a basic outline of what goes into my deployment scripts or how to architect them. Thanks for reading.

-Frog Out

Originally posted on: https://briantjackett.com/archive/2009/08/11/lessons-learned-from-automating-a-sharepoint-deployment.aspx#484378

Brian, you made valid points and I have stumbled across similar issues, which actually makes automation impossible except for some simple tasks like solution deployment and the like. What we do most of the time is the following: once testing is complete, we move the content DB from the staging to the production environment and redeploy the solutions. Experience has shown that this gives us almost no headaches. Some may want to rely on the content deployment API, custom site definitions and/or hundreds of lines of PowerShell scripts. Yet, in most cases moving the content DB along with solution deployment does the trick.

Originally posted on: https://briantjackett.com/archive/2009/08/11/lessons-learned-from-automating-a-sharepoint-deployment.aspx#484400

Steve, glad to see I’m not alone in issues of automated deployment. I do have a question though. If you are migrating your content DB up the chain (towards production), aren’t you losing whatever data may have been in the destination environment? Prior to launching a production environment this seems acceptable, but once you go live with production you may need to keep data in that environment (as is our case) if it is an interactive application storing data from the users. Thoughts?

Originally posted on: https://briantjackett.com/archive/2009/08/11/lessons-learned-from-automating-a-sharepoint-deployment.aspx#484738

Pretty good post. Have you tried using timer jobs to back up the misbehaving SPWebConfigModification updates? I was able to provision web part connections through a feature receiver. If interested I can blog about it, but it’s been done. Use a combo move. We have features with receivers and we have site definitions with provisioners. If you have the provisioner create the groups before you apply the web template, the features won’t activate until after.

Originally posted on: https://briantjackett.com/archive/2009/08/11/lessons-learned-from-automating-a-sharepoint-deployment.aspx#484766

Brian B., thanks for the advice. I haven’t used timer jobs before except when deploying WSPs at a specific time. As for web part connections, yes, I’ve thought about using a feature receiver; I just haven’t had time to test it out. If you have any links to resources on that I would appreciate them. As for the groups, what do you mean by a site def with a provisioner? I’d be interested to hear more about that.

Originally posted on: https://briantjackett.com/archive/2009/08/11/lessons-learned-from-automating-a-sharepoint-deployment.aspx#528038

Nice article, thanks for sharing.